Mini Program

This guide explains how to quickly integrate CloudBase AI capabilities into Mini Programs.

Preparation

- Register a WeChat Mini Program account and create a local mini program project

- The Mini Program basic library must be version 3.15.1 or higher. This version supports calling CloudBase platform models (DeepSeek, MiniMax, Hunyuan, Kimi, GLM, etc.) via createModel("cloudbase").

- CloudBase must be enabled for the Mini Program.

- Purchase a Token Resource Pack and enable the required models in Console → AI → Text Models
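Because createModel("cloudbase") requires basic library 3.15.1 or higher, it can help to check the running version before calling it. The sketch below compares dotted version strings numerically, so "3.15.1" correctly ranks above "3.9.0". compareVersion and supportsCloudbaseModels are hypothetical helper names, and the sketch assumes wx.getAppBaseInfo() is available in your basic library to report SDKVersion.

```javascript
// Compare two dotted version strings such as "3.15.1" and "3.9.0".
// Returns 1 if a > b, -1 if a < b, 0 if equal. Missing parts count as 0.
function compareVersion(a, b) {
  const pa = String(a).split(".").map(Number);
  const pb = String(b).split(".").map(Number);
  const len = Math.max(pa.length, pb.length);
  for (let i = 0; i < len; i++) {
    const x = pa[i] || 0;
    const y = pb[i] || 0;
    if (x > y) return 1;
    if (x < y) return -1;
  }
  return 0;
}

// Minimum basic library version stated in the Preparation list.
const MIN_AI_LIB_VERSION = "3.15.1";

// Returns true if the given basic library version can use createModel("cloudbase").
function supportsCloudbaseModels(sdkVersion) {
  return compareVersion(sdkVersion, MIN_AI_LIB_VERSION) >= 0;
}

// Inside a Mini Program, read the running basic library version.
// Assumption: wx.getAppBaseInfo() exists in your basic library.
// The typeof guard keeps this block runnable outside WeChat.
if (typeof wx !== "undefined" && typeof wx.getAppBaseInfo === "function") {
  const { SDKVersion } = wx.getAppBaseInfo();
  if (!supportsCloudbaseModels(SDKVersion)) {
    wx.showModal({
      title: "Update required",
      content: `Basic library ${SDKVersion} is too old; ${MIN_AI_LIB_VERSION} or higher is required.`,
      showCancel: false,
    });
  }
}
```

Comparing each numeric segment, rather than the raw strings, avoids the common mistake of treating "3.9" as newer than "3.15".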

Guides

- Guide 1: Call large models directly to implement text generation

- Guide 2: Implement intelligent conversation through Agent

Guide 1: Call Large Models to Implement Text Generation

In a Mini Program, you can call the text generation capability of a large model directly. The simplest example is a "Seven-Character Quatrain" poem generator, shown below as a demo:

Step 1: Initialize CloudBase Environment

Initialize the CloudBase environment in your mini program code:

wx.cloud.init({
  env: "<CloudBase Environment ID>",
});

Replace "<CloudBase Environment ID>" with your actual CloudBase environment ID. After successful initialization, you can use wx.cloud.extend.AI to call AI capabilities.
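A common pattern is to initialize CloudBase once in app.js when the Mini Program launches, so every page can use wx.cloud.extend.AI afterwards. The sketch below assumes that layout; initCloud is a hypothetical helper, and its wxApi parameter exists only so the logic can be exercised outside WeChat.

```javascript
// app.js: initialize CloudBase once when the Mini Program launches.
// "<CloudBase Environment ID>" is a placeholder; replace it with your own ID.
const ENV_ID = "<CloudBase Environment ID>";

// Returns true if wx.cloud was initialized, false otherwise.
// wxApi defaults to the global wx object (hypothetical testing convenience).
function initCloud(wxApi = typeof wx !== "undefined" ? wx : undefined) {
  if (!wxApi || !wxApi.cloud) {
    console.error("wx.cloud is unavailable; check the basic library version.");
    return false;
  }
  wxApi.cloud.init({ env: ENV_ID });
  return true;
}

// Register the app only when running inside a Mini Program.
if (typeof App === "function") {
  App({
    onLaunch() {
      initCloud();
    },
  });
}
```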

Step 2: Create AI Model and Call Text Generation

The following mini program code requires basic library 3.15.1 or above and uses the deepseek-v4-flash model as an example:

// Call this from an async function (for example, a page event handler), because await needs an async context
async function generatePoem() {
  // Create a model instance backed by the CloudBase platform models
  const model = wx.cloud.extend.AI.createModel("cloudbase");

  // Set the AI system prompt, here using seven-character quatrain generation as an example
  const systemPrompt =
    "Please strictly follow the rules of seven-character quatrains or seven-character regulated verse in creation. Tones must conform to regulations, rhymes should be harmonious and natural, and rhyming characters should be in the same rhyme category. Create content around the user's given theme. A seven-character quatrain has four lines, each with seven characters; a seven-character regulated verse has eight lines, each with seven characters, with the second and third couplets requiring neat correspondence. At the same time, incorporate vivid imagery, rich emotions, and beautiful artistic conception, demonstrating the charm and beauty of classical poetry.";

  // User's natural language input, such as 'Write me a poem praising Jade Dragon Snow Mountain'
  const userInput = "Write me a poem praising Jade Dragon Snow Mountain";

  // Pass the system prompt and user input to the large model
  const res = await model.streamText({
    data: {
      model: "deepseek-v4-flash", // Specify the model; it must be enabled in Console → AI → Text Models
      messages: [
        { role: "system", content: systemPrompt },
        { role: "user", content: userInput },
      ],
    },
  });

  // Receive the response from the large model.
  // The result is streamed, so loop over textStream to receive the complete response text.
  for await (const str of res.textStream) {
    console.log(str);
  }
}

// Output result:
// "# Ode to Jade Dragon Snow Mountain\n"
// "Snowy peaks soar into clouds at the apex, jade bones and ice skin defy the nine heavens.\n"
// "Snow shadows and misty light enhance the scenery, sacred mountain sanctuary sustains endless charm.\n"

As you can see, with just a few lines of mini program code, you can directly call CloudBase to access the text generation capability of large models.
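In a real page you usually want to show the poem as it streams in rather than only logging chunks. The sketch below accumulates the chunks from textStream into one string and refreshes the page after each chunk. collectText, the data field poem, and the handler onGenerate are hypothetical names for illustration; the streamText call mirrors the example above.

```javascript
// Accumulate streamed chunks into one string, invoking onChunk with the
// text received so far after each chunk (e.g. to refresh the page).
async function collectText(textStream, onChunk = () => {}) {
  let full = "";
  for await (const chunk of textStream) {
    full += chunk;
    onChunk(full);
  }
  return full;
}

// Hypothetical page wiring; runs only inside a Mini Program.
if (typeof Page === "function") {
  Page({
    data: { poem: "" },
    async onGenerate() {
      const model = wx.cloud.extend.AI.createModel("cloudbase");
      const res = await model.streamText({
        data: {
          model: "deepseek-v4-flash", // assumption: enabled in Console → AI → Text Models
          messages: [
            { role: "user", content: "Write me a poem praising Jade Dragon Snow Mountain" },
          ],
        },
      });
      // Update the page with the partial poem as each chunk arrives.
      const text = await collectText(res.textStream, (soFar) => this.setData({ poem: soFar }));
      console.log(text);
    },
  });
}
```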

Guide 2: Implement Intelligent Conversation Through Agent

Calling the text generation interface of a large model directly is enough for a simple question-and-answer scenario. A complete conversation feature, however, needs more than the raw input and output of a large model: you need to turn the model into a complete Agent so it can interact with users more effectively.
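The gap is easy to see in code. With the raw model, the Mini Program itself has to keep the conversation history and resend all of it on every call, as in this minimal sketch. ChatSession is a hypothetical helper, not part of the CloudBase SDK, and it assumes the same streamText request shape as Guide 1. This kind of bookkeeping is part of what a complete Agent is meant to handle for you.

```javascript
// Hypothetical helper: keeps the full message history so that each
// streamText call sees earlier turns. The caller must resend the history
// on every request, because each request only contains what is passed in.
class ChatSession {
  constructor(model, modelName, systemPrompt) {
    this.model = model;
    this.modelName = modelName;
    this.messages = systemPrompt ? [{ role: "system", content: systemPrompt }] : [];
  }

  // Send one user turn, collect the streamed reply, and record both turns.
  async ask(userInput) {
    this.messages.push({ role: "user", content: userInput });
    const res = await this.model.streamText({
      data: { model: this.modelName, messages: [...this.messages] }, // send a copy of the history
    });
    let reply = "";
    for await (const chunk of res.textStream) {
      reply += chunk;
    }
    this.messages.push({ role: "assistant", content: reply });
    return reply;
  }
}

// Usage inside a Mini Program (basic library 3.15.1+), from an async handler:
// const session = new ChatSession(
//   wx.cloud.extend.AI.createModel("cloudbase"), "deepseek-v4-flash", "You are a helpful assistant.");
// const answer = await session.ask("What is CloudBase?");
```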

CloudBase's AI capabilities provide not only raw large model access but also Agent access. Developers can define their own Agents on CloudBase and then call them directly from Mini Programs for conversations.

Step 1: Initialize CloudBase Environment

Initialize the CloudBase environment in your mini program code:

wx.cloud.init({
  env: "<CloudBase Environment ID>",
});

Replace "<CloudBase Environment ID>" with your actual CloudBase environment ID. After successful initialization, you can use wx.cloud.extend.AI to call AI capabilities.

Step 2: Create an Agent

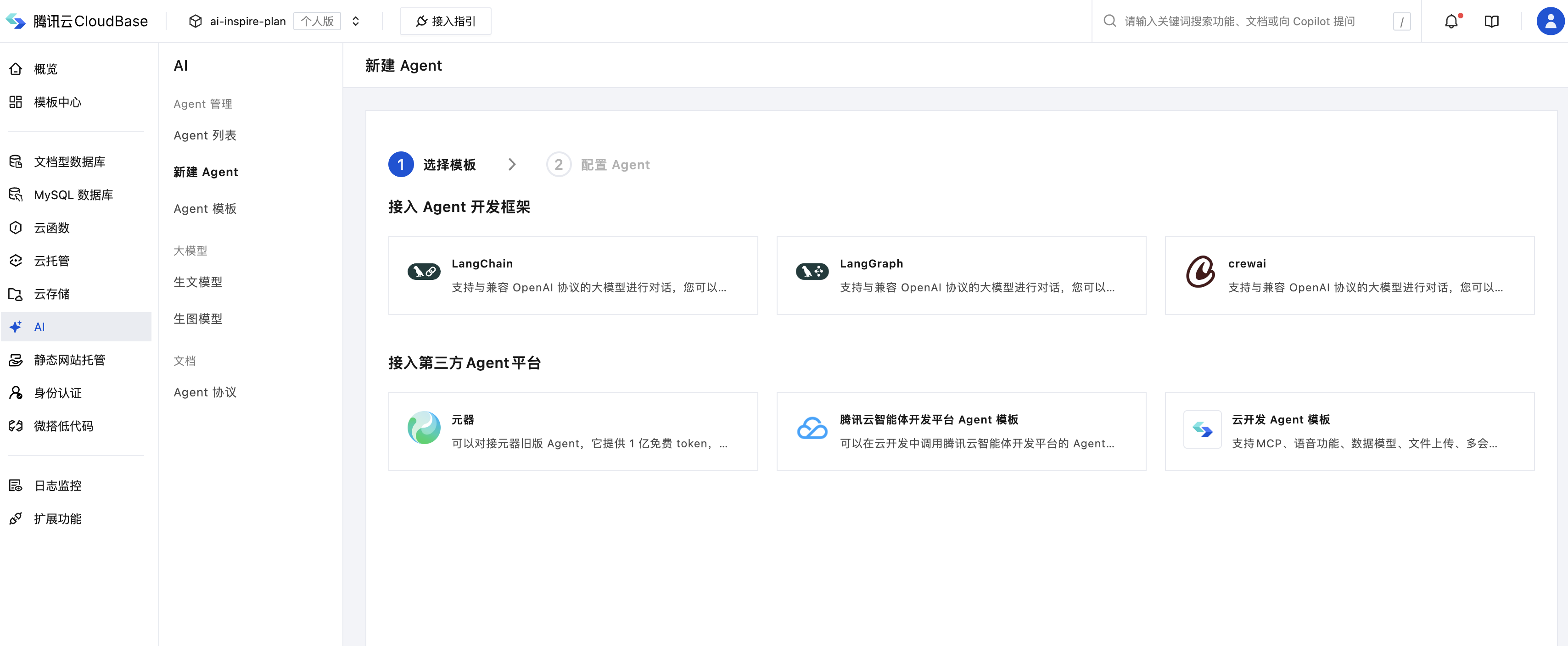

Go to CloudBase Platform → AI, select a template, and create an Agent.

You can also create Agents with open-source frameworks such as LangChain and LangGraph, or integrate third-party Agents such as Tencent Yuanqi, Intelligent Agent Development Platform, and Dify.

Copy the AgentID shown on the page. It is the unique identifier of the Agent and is used in the code in Step 3.
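To avoid scattering the environment ID and AgentID across pages, you might keep both in one module and fail fast if a placeholder was never replaced. This is a minimal sketch; the file name config.js, CLOUDBASE_CONFIG, and requireConfigured are hypothetical, and both values shown are placeholders.

```javascript
// Hypothetical config module (e.g. config.js) holding the IDs from
// Step 1 and Step 2. Both values below are placeholders.
const CLOUDBASE_CONFIG = {
  envId: "<CloudBase Environment ID>",
  agentId: "<AgentID>",
};

// Throws if a value is empty or still looks like an unreplaced "<...>" placeholder.
function requireConfigured(name, value) {
  const trimmed = typeof value === "string" ? value.trim() : "";
  if (trimmed === "" || /^<.*>$/.test(trimmed)) {
    throw new Error(`${name} is not configured; replace the placeholder with your real value.`);
  }
  return value;
}

// Mini Program JS files support CommonJS exports.
if (typeof module !== "undefined") {
  module.exports = { CLOUDBASE_CONFIG, requireConfigured };
}
```

A page could then pass requireConfigured("agentId", CLOUDBASE_CONFIG.agentId) as the botId, so a forgotten placeholder surfaces as a clear error instead of a failed request.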

Step 3: Implement Conversation with Agent in Mini Program

We just created an Agent named "Mini Program Development Expert". Let's chat with it and see whether it can explain common CloudBase error messages. In the Mini Program, use the following code to call the Agent we just created and start a conversation:

// Call this from an async function (for example, a page event handler), because await needs an async context
async function chatWithAgent() {
  // User input, here we use an error message as an example
  const userInput =
    "What does this error in my mini program mean: FunctionName parameter could not be found";

  const res = await wx.cloud.extend.AI.bot.sendMessage({
    data: {
      botId: "<AgentID>", // Agent unique identifier from Step 2
      msg: userInput,
    },
  });

  // The reply is streamed; print each chunk as it arrives
  for await (const x of res.textStream) {
    console.log(x);
  }
}

// Output result:
// "### Error Explanation\n"
// "**Error message:** `FunctionName \n"
// "parameter could not be found` \n"
// "This error usually means that when calling a function, \n"
// "the specified function name parameter was not found. Specifically, \n"
// "it could be one of the following situations:\n"
// ……
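To display the Agent's answer in a page, you can accumulate the streamed chunks and handle failures explicitly. The sketch below reuses the bot.sendMessage call shown above; streamAgentReply, the data fields reply and loading, and the handler onAsk are hypothetical names, "<AgentID>" is a placeholder, and the sendMessage parameter exists only so the logic can run outside WeChat.

```javascript
// Placeholder: replace with the AgentID copied in Step 2.
const AGENT_ID = "<AgentID>";

// Send one message to the Agent and accumulate the streamed reply,
// calling onChunk with the text received so far after each chunk.
async function streamAgentReply(
  msg,
  onChunk = () => {},
  sendMessage = (args) => wx.cloud.extend.AI.bot.sendMessage(args)
) {
  const res = await sendMessage({ data: { botId: AGENT_ID, msg } });
  let reply = "";
  for await (const chunk of res.textStream) {
    reply += chunk;
    onChunk(reply);
  }
  return reply;
}

// Hypothetical page wiring; runs only inside a Mini Program.
if (typeof Page === "function") {
  Page({
    data: { reply: "", loading: false },
    async onAsk() {
      this.setData({ reply: "", loading: true });
      try {
        await streamAgentReply(
          "What does this error in my mini program mean: FunctionName parameter could not be found",
          (soFar) => this.setData({ reply: soFar })
        );
      } catch (err) {
        console.error("Agent call failed:", err);
        wx.showToast({ title: "Request failed", icon: "none" });
      } finally {
        this.setData({ loading: false });
      }
    },
  });
}
```

Calling setData on every chunk keeps the reply visibly live. For very long replies you may want to throttle these updates, since each setData call transfers data from the Mini Program's logic layer to its render layer.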